For a while now, I’ve been wanting to write a post about my experience building systems to block bots and scrapers. I kept putting it off because I didn’t know the best way to structure it. So I’ve decided to just do a brain dump of all the techniques I’ve encountered and how effective they are. Here goes.

IPs

Blocking an IP is only a short-term fix, as bots can easily switch to another one.
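To make that concrete, here’s a minimal Python sketch of an IP blocklist. It assumes you keep your own list of offending addresses and ranges. The ones below are made-up documentation ranges from RFC 5737, and `is_ip_blocked` is a hypothetical helper, not any vendor’s API. It’s just a membership check, which is exactly why it doesn’t last: the moment the bot moves to an address outside the list, it gets through.

```python
import ipaddress

# Hypothetical blocklist of addresses/ranges you've seen misbehave.
# These are RFC 5737 documentation ranges, not real offenders.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("203.0.113.7/32"),   # a single address
    ipaddress.ip_network("198.51.100.0/24"),  # a whole range
]


def is_ip_blocked(ip: str) -> bool:
    """Return True if the client IP falls inside any blocked network."""
    addr = ipaddress.ip_address(ip)
    # `in` returns False when IPv4/IPv6 versions don't match.
    return any(addr in net for net in BLOCKED_NETWORKS)


if __name__ == "__main__":
    print(is_ip_blocked("203.0.113.7"))  # True: the address we blocked
    print(is_ip_blocked("203.0.113.8"))  # False: the bot's next address walks right in
```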

ASNs

Firewall vendors (e.g., Cloudflare) give you the Autonomous System Number (ASN) of the IP that made the request. You can use it to identify hosting providers; DigitalOcean’s ASN 14061 has quite a reputation. ASN data is also usually included in the cheapest tiers; Cloudflare even offers it on the free plan.

Compared to blocking IPs, blocking ASNs is a more effective way of stopping scrapers, while still keeping costs low. Unfortunately, getting around it is just as cheap for malicious actors: they can simply hide behind residential proxies.
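Here’s a similar sketch for ASNs. It assumes your firewall vendor (or an IP-to-ASN lookup) has already attached the ASN to the request. `is_asn_blocked` and the sample requests are hypothetical, and 64500 comes from the ASN range reserved for documentation, standing in for a residential ISP. The point is that rotating IPs within the same hosting provider no longer helps the bot.

```python
from typing import Optional

# Hypothetical blocklist. 14061 is DigitalOcean's ASN; add whichever hosting
# providers show up in your own logs.
BLOCKED_ASNS = {14061}


def is_asn_blocked(asn: Optional[int]) -> bool:
    """Return True if the request's ASN is on the blocklist.

    `asn` is assumed to come from your firewall vendor or an IP-to-ASN lookup;
    None means no ASN was available, in which case the request is let through.
    """
    return asn is not None and asn in BLOCKED_ASNS


if __name__ == "__main__":
    # Hypothetical requests: (client IP, ASN attached to it).
    requests_seen = [
        ("203.0.113.7", 14061),    # bot on a hosting provider
        ("203.0.113.99", 14061),   # same bot after switching IPs: still blocked
        ("198.51.100.23", 64500),  # documentation ASN standing in for a residential ISP
    ]
    for ip, asn in requests_seen:
        print(ip, "blocked" if is_asn_blocked(asn) else "allowed")
```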

Residential proxies and other kinds of IP databases

There are paid services out there (e.g., IPinfo) that tell you whether an IP belongs to a residential proxy or a hosting provider, or has been flagged for running abusive or malicious services.

This approach offers broader coverage than picking ASNs one by one. The downside is that you risk blocking legitimate users who share the IP the proxy sits on.
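Here’s a rough Python sketch of turning that kind of data into a decision. The `IpReputation` fields are hypothetical and don’t mirror any particular provider’s response format, so you’d map your provider’s fields onto them. Residential-proxy blocking is an opt-in flag here because of the collateral damage to legitimate users sharing the IP.

```python
from dataclasses import dataclass


@dataclass
class IpReputation:
    """Hypothetical shape of an IP-intelligence lookup result.

    Real providers use their own field names; map them onto these.
    """
    is_hosting: bool = False
    is_residential_proxy: bool = False
    is_abusive: bool = False


def should_block(rep: IpReputation, block_residential_proxies: bool = False) -> bool:
    """Decide whether to block a request based on reputation flags."""
    if rep.is_hosting or rep.is_abusive:
        return True
    # Opt-in: the same residential IP may also carry legitimate users.
    return block_residential_proxies and rep.is_residential_proxy


if __name__ == "__main__":
    proxy_hit = IpReputation(is_residential_proxy=True)
    print(should_block(proxy_hit))                                  # False
    print(should_block(proxy_hit, block_residential_proxies=True))  # True
    print(should_block(IpReputation(is_hosting=True)))              # True
```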

There are ways around this, too. At the end of the day, any personal device hooked up to a residential ISP can be turned into a proxy. Some people set up their devices willingly, in exchange for money. Others don’t realize that software running in the background, often installed through bundled apps or malware, has turned their device into a proxy node.

User Agent header

Basic scrapers send something obvious like python-requests/2.31.0, which you can match on in your firewall rules.

The problem is that it’s trivial to overwrite this header with something that looks like a legitimate browser.
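Here’s a minimal Python sketch of that kind of rule, assuming you match on the header server-side. The prefixes are the default User Agents of a few common HTTP clients (requests, urllib, curl, Wget, Go’s net/http, Scrapy). They’re an illustrative starting point, not a complete list, and `looks_like_scraper` is a hypothetical helper. The second check shows how a spoofed header slips straight through.

```python
import re
from typing import Optional

# Illustrative starting point: default User Agent prefixes of common HTTP
# clients (requests, urllib, curl, Wget, Go's net/http, Scrapy). Not exhaustive.
SCRAPER_UA_PATTERN = re.compile(
    r"^(python-requests|python-urllib|curl|wget|go-http-client|scrapy)/",
    re.IGNORECASE,
)


def looks_like_scraper(user_agent: Optional[str]) -> bool:
    """Return True if the User Agent starts with a known HTTP-client prefix."""
    return bool(SCRAPER_UA_PATTERN.match(user_agent or ""))


if __name__ == "__main__":
    print(looks_like_scraper("python-requests/2.31.0"))  # True
    # The same script, with the header overwritten to mimic Chrome:
    spoofed = (
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
        "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"
    )
    print(looks_like_scraper(spoofed))  # False
```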

JA4 hash & other client fingerprinting

Firewall vendors provide the JA4 fingerprints (derived from the TLS handshake) of the clients accessing your site as part of their premium packages. Then there are tools like FingerprintJS, which look at various other aspects of your browser.

These solutions are far more precise than relying on the User Agent header, though that precision comes at a price. And if the bot maintainer puts in the effort, they can eventually mask their fingerprint as well, at which point you’re back to playing whack-a-mole.
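To get a feel for what a JA4 fingerprint encodes, here’s a small Python sketch. A JA4 string has three underscore-separated parts. The first is a readable summary of the TLS Client Hello: protocol, TLS version, whether SNI carried a domain, the cipher and extension counts, and the ALPN value. The other two are truncated hashes of the cipher suites and of the extensions and signature algorithms. The fingerprint and blocklist below are made up, and `parse_ja4`/`is_ja4_blocked` are hypothetical helpers; in practice you’d collect values from your own traffic.

```python
from typing import NamedTuple


class Ja4Summary(NamedTuple):
    protocol: str         # e.g. "t" for TLS over TCP, "q" for QUIC
    tls_version: str      # e.g. "13" for TLS 1.3
    sni: str              # "d" if SNI carried a domain, "i" if not
    cipher_count: int     # cipher suites offered (GREASE values excluded)
    extension_count: int  # extensions sent (GREASE values excluded)
    alpn: str             # first and last chars of the first ALPN value, "00" if none


def parse_ja4(ja4: str) -> Ja4Summary:
    """Decode the readable first section (JA4_a) of a JA4 fingerprint."""
    parts = ja4.split("_")
    if len(parts) != 3 or len(parts[0]) != 10:
        raise ValueError(f"not a JA4 fingerprint: {ja4!r}")
    a = parts[0]
    return Ja4Summary(
        protocol=a[0],
        tls_version=a[1:3],
        sni=a[3],
        cipher_count=int(a[4:6]),
        extension_count=int(a[6:8]),
        alpn=a[8:10],
    )


# Hypothetical fingerprints you've attributed to bots in your own traffic.
BLOCKED_JA4 = {"t13d1516h2_aaaaaaaaaaaa_bbbbbbbbbbbb"}


def is_ja4_blocked(ja4: str) -> bool:
    """Exact-match lookup; the full string must match, hashes included."""
    return ja4 in BLOCKED_JA4


if __name__ == "__main__":
    fp = "t13d1516h2_aaaaaaaaaaaa_bbbbbbbbbbbb"  # made-up example value
    print(parse_ja4(fp))
    print(is_ja4_blocked(fp))  # True
```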

CAPTCHA, Cloudflare Turnstile, and other kinds of challenges

These work pretty well, as long as you’re OK with adding a little friction for users.

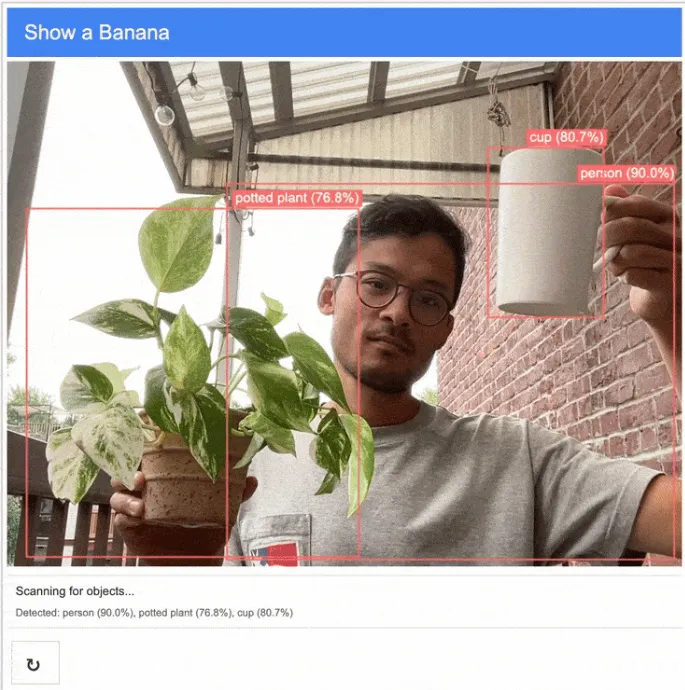

There’s still software out there that can bypass them, of course. But if you’re very motivated, you can also build your own CAPTCHA. Check out this prototype I spotted on Reddit; it always cracks me up.
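If you go with Turnstile, the token the widget produces (by default submitted in a form field named cf-turnstile-response) still has to be checked on your server against Cloudflare’s siteverify endpoint; otherwise a bot can simply skip the challenge. Here’s a minimal Python sketch using only the standard library, assuming you already have your secret key. `verify_turnstile` is a hypothetical helper, and the `post` parameter only exists so the network call can be swapped out in tests.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Optional

SITEVERIFY_URL = "https://challenges.cloudflare.com/turnstile/v0/siteverify"


def _post_form(url: str, fields: dict) -> dict:
    """POST form-encoded fields and decode the JSON response."""
    data = urllib.parse.urlencode(fields).encode()
    with urllib.request.urlopen(url, data=data, timeout=10) as resp:
        return json.load(resp)


def verify_turnstile(
    token: str,
    secret: str,
    remote_ip: Optional[str] = None,
    post: Callable[[str, dict], dict] = _post_form,
) -> bool:
    """Return True if Cloudflare reports the Turnstile token as valid."""
    if not token:
        return False  # no token means the challenge was never completed
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip  # optional: the visitor's IP
    result = post(SITEVERIFY_URL, fields)
    return bool(result.get("success"))
```

In a request handler, you’d call it with the submitted token before doing anything expensive, and reject the request when it returns False.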

The end

There are more techniques to cover, but I’ll stop here as I don’t like making my blog posts too lengthy. If there’s interest, I’ll continue my ramblings in another post.